Reinforcement Learning For Business: Real-Life Examples

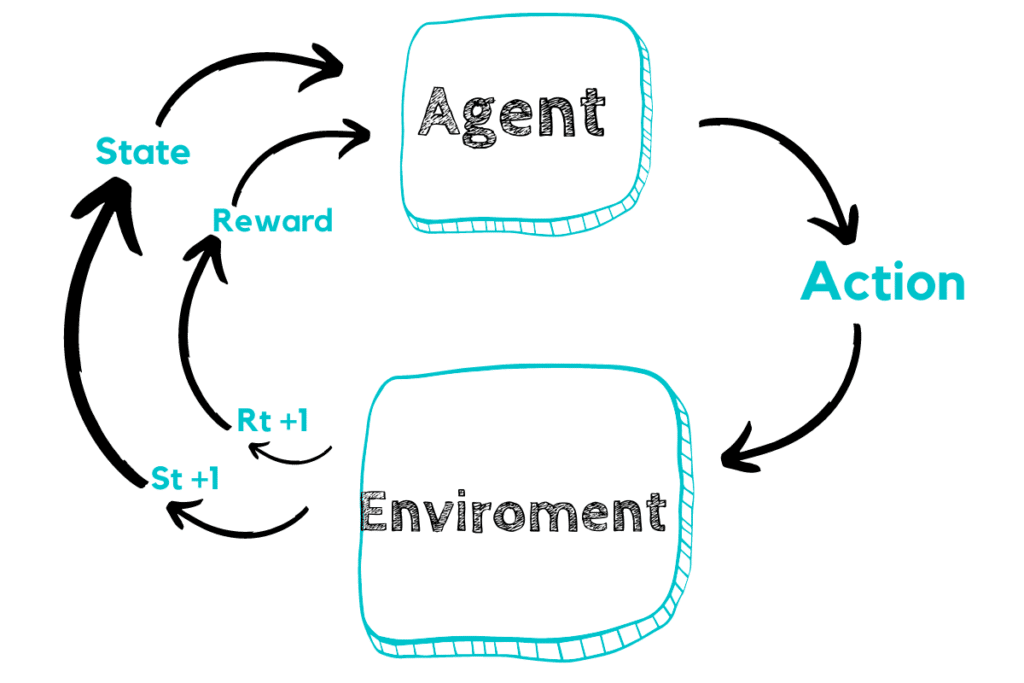

Among machine learning techniques, Reinforcement Learning (RL) has seen a sharp rise in popularity. Much of the buzz around it was sparked by AlphaGo from DeepMind, a system developed to play Go, an ancient board game with an enormous number of possible positions. At its core, Reinforcement Learning means learning through interaction with an environment: the agent adapts to, learns from, and calibrates its future decisions based on its mistakes. RL relies on delayed and cumulative rewards. An agent takes an action, that action changes the state of the environment, and the agent receives a reward whose full value may only become clear several steps later. When similar circumstances occur again, the agent draws on its past experience to choose the best decision.
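To make the idea of delayed, cumulative reward concrete, here is a minimal Python sketch, assuming a tabular setting with discrete states and actions (the state and action names are hypothetical). `discounted_return` shows how later rewards are weighted down by a discount factor `gamma`, and `q_update` is one step of standard Q-learning, the rule by which an agent gradually "remembers" which action worked best in a given state.

```python
from collections import defaultdict

def discounted_return(rewards, gamma=0.9):
    """Cumulative reward G = r0 + gamma*r1 + gamma^2*r2 + ..., so delayed rewards count less."""
    g = 0.0
    for r in reversed(rewards):
        g = r + gamma * g
    return g

def q_update(q, state, action, reward, next_state, actions, alpha=0.5, gamma=0.9):
    """One tabular Q-learning step: nudge Q(s, a) toward r + gamma * max_a' Q(s', a')."""
    best_next = max(q[(next_state, a)] for a in actions)
    target = reward + gamma * best_next
    q[(state, action)] += alpha * (target - q[(state, action)])
    return q[(state, action)]

# Hypothetical example: the reward only arrives on the third step.
print(discounted_return([0, 0, 1]))  # about 0.81

q = defaultdict(float)  # unseen (state, action) pairs start at 0
print(q_update(q, "s0", "right", 1.0, "s1", actions=["left", "right"]))  # 0.5
```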

The intended application of Reinforcement Learning is to evolve and improve systems without human or programmatic intervention. This creates an interesting dynamic in real-world applications such as autonomous vehicles. Autonomous driving is a tough puzzle to solve, at least with conventional AI methods alone. Its perception typically relies on computer vision built on Convolutional Neural Networks (CNNs). However, because of the strong interaction with the environment, including pedestrians, other vehicles, road infrastructure, road conditions, and driver behavior, autonomous driving cannot be modeled as a supervised learning problem alone. Viewed abstractly, an autonomous driving agent carries out a sequence of three tasks: sensing, planning, and control.
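The sensing, planning, and control split can be sketched as three small functions. This is an illustrative sketch, not how any production vehicle works: the `Observation` type, the 2-second following gap, and the controller gain are all hypothetical, and the perception step simply passes through known values where a real system would run CNN-based vision.

```python
from dataclasses import dataclass

@dataclass
class Observation:
    gap_m: float      # distance to the vehicle ahead, in metres
    speed_mps: float  # our own speed, in metres per second

def sense(true_gap_m, true_speed_mps):
    # Placeholder perception stage: a real vehicle would estimate these
    # values from camera frames (e.g. with CNN-based vision) and other sensors.
    return Observation(gap_m=true_gap_m, speed_mps=true_speed_mps)

def plan(obs, time_headway_s=2.0, max_speed_mps=30.0):
    # Choose a target speed that keeps roughly a 2-second gap to the car ahead.
    return min(max_speed_mps, obs.gap_m / time_headway_s)

def control(obs, target_speed_mps, gain=0.5, max_accel=3.0):
    # Proportional controller: turn the speed error into a bounded acceleration command (m/s^2).
    accel = gain * (target_speed_mps - obs.speed_mps)
    return max(-max_accel, min(max_accel, accel))

obs = sense(true_gap_m=40.0, true_speed_mps=25.0)
target = plan(obs)             # 20.0 m/s
print(control(obs, target))    # -2.5, i.e. brake gently
```

In an RL formulation, the hand-written `plan` and `control` stages would be replaced by a policy learned from interaction with the environment.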

Curious about what reinforcement learning is in ML? Read our previous article, where we briefly covered machine learning in cybersecurity and touched on reinforcement learning.

What is reinforcement learning? Real-life examples

Reinforcement learning in healthcare

Many medical decision problems are sequential by nature. When a patient sees a doctor, a treatment plan is decided upon. Once the planned treatment has been administered, its result dictates the next logical step in the patient's care. Modeled as a Markov decision process (MDP), this type of decision problem can be addressed with RL algorithms.
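As a rough illustration of what "modeled as an MDP" means, the sketch below encodes a made-up treatment problem (the states, actions, probabilities, and rewards are all hypothetical, not clinical data) and solves it with value iteration. Value iteration assumes the transition probabilities are known; RL algorithms address the same problem when they are not and must be learned from experience.

```python
# Hypothetical treatment MDP: state -> action -> list of (probability, next_state, reward).
MDP = {
    "sick": {
        "standard": [(0.6, "improving", 0.0), (0.4, "sick", -1.0)],
        "aggressive": [(0.8, "improving", -0.5), (0.2, "sick", -1.0)],
    },
    "improving": {
        "standard": [(0.7, "recovered", 10.0), (0.3, "sick", -1.0)],
        "stop": [(0.4, "recovered", 10.0), (0.6, "sick", -1.0)],
    },
    "recovered": {},  # terminal state: no further actions
}

def expected_value(outcomes, values, gamma):
    """Expected immediate reward plus discounted value of the next state."""
    return sum(p * (r + gamma * values[s2]) for p, s2, r in outcomes)

def value_iteration(mdp, gamma=0.9, tol=1e-9):
    values = {s: 0.0 for s in mdp}
    while True:
        delta = 0.0
        for s, actions in mdp.items():
            if not actions:
                continue
            best = max(expected_value(o, values, gamma) for o in actions.values())
            delta = max(delta, abs(best - values[s]))
            values[s] = best
        if delta < tol:
            break
    # Greedy policy with respect to the converged values.
    policy = {
        s: max(actions, key=lambda a: expected_value(actions[a], values, gamma))
        for s, actions in mdp.items()
        if actions
    }
    return values, policy

values, policy = value_iteration(MDP)
print(policy)  # {'sick': 'aggressive', 'improving': 'standard'}
```

Note that the policy looks beyond the immediate step: in the "sick" state it accepts the small immediate penalty of the aggressive option because it raises the chance of reaching the valuable "recovered" state.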

A limitation of many conventional AI decision-support systems is that they act only on the patient's current state rather than considering the sequence of past decisions. Reinforcement Learning takes into account not only a treatment's immediate effect but also its long-term benefit to the patient.

While using Reinforcement Learning in medicine is appealing, some challenges must be overcome before RL algorithms can be used in hospitals. RL typically learns by trial and error, and that kind of exploration is not a viable option with real patients. Instead, RL has to learn from data that already exist because fixed treatment strategies were applied and recorded. This "off-policy" form of learning therefore plays an important role in a setting such as medicine.
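The sketch below illustrates off-policy learning from a fixed log, assuming a tiny hypothetical dataset of recorded transitions. It uses plain tabular Q-learning replayed over the log (a simple form of offline RL); it is not a method taken from the medical literature mentioned here. The greedy policy it learns can differ from the strategies that generated the data, which is exactly what off-policy learning allows.

```python
from collections import defaultdict

# Hypothetical log recorded under existing, fixed treatment strategies:
# (state, action, reward, next_state, episode_finished)
LOGGED = [
    ("high_risk", "drug_a", -1.0, "high_risk", False),
    ("high_risk", "drug_b", 0.0, "low_risk", False),
    ("low_risk", "drug_a", 5.0, "discharged", True),
    ("low_risk", "drug_b", 1.0, "discharged", True),
]
ACTIONS = ["drug_a", "drug_b"]

def offline_q_learning(transitions, actions, gamma=0.9, alpha=0.1, sweeps=500):
    """Q-learning that only replays a fixed dataset and never tries new actions on patients."""
    q = defaultdict(float)
    for _ in range(sweeps):
        for s, a, r, s2, done in transitions:
            # Off-policy target: assume the best known action is taken next,
            # regardless of which action was actually taken in the log.
            target = r if done else r + gamma * max(q[(s2, b)] for b in actions)
            q[(s, a)] += alpha * (target - q[(s, a)])
    return q

q = offline_q_learning(LOGGED, ACTIONS)
policy = {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in ("high_risk", "low_risk")}
print(policy)  # {'high_risk': 'drug_b', 'low_risk': 'drug_a'}
```

In practice, offline RL is only trustworthy for actions that the log actually covers; estimating the value of treatments that were rarely or never given is a known weakness of this approach.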

Another key factor in determining the optimal policy is defining the reward. In the medical field, this means weighing factors such as a patient's life expectancy against the cost of a particular treatment, a dilemma already under serious discussion in multiple countries.

The literature already contains several examples of Reinforcement Learning applications, including treatments for lung cancer and epilepsy. In the case of sepsis, deep RL treatment strategies have been developed from medical registry data.

Nephrology offers a problem that is especially well suited to sequential decision-making: the use of erythropoiesis-stimulating agents (ESAs) in patients with chronic kidney disease. Because the effects of ESAs are unpredictable, the patient's condition must be closely monitored. Depending on the patient's current state, the medical team must decide which action to take next, and that decision in turn affects the patient's future condition. For this reason, multiple authors have advocated using RL to control the administration of ESAs.

Reinforcement learning in security

Reinforcement Learning has also shown potential for improving security in various applications. For example, researchers have used RL to enhance the security of computer networks. With reinforcement learning for cybersecurity, an agent can learn to detect and respond to network attacks in real time. This approach can help prevent cyber attacks and minimize the damage they cause.
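A full network-defense agent is beyond a short example, but the core learning loop can be sketched as an epsilon-greedy contextual bandit, a one-step simplification of RL. The alert levels, the allow/block actions, and the reward values below are hypothetical; the agent is never told which action is correct and discovers it from the rewards alone.

```python
import random

ALERT_LEVELS = ["low", "high"]
ACTIONS = ["allow", "block"]

# Hypothetical payoffs: blocking a real attack is rewarded, blocking benign traffic is penalised.
REWARD = {
    ("low", "allow"): 1.0, ("low", "block"): -1.0,
    ("high", "allow"): -5.0, ("high", "block"): 2.0,
}

def train_responder(steps=2000, epsilon=0.2, seed=0):
    """Epsilon-greedy contextual bandit: learn the average reward of each (alert level, action)."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in ALERT_LEVELS for a in ACTIONS}
    n = {key: 0 for key in q}
    for _ in range(steps):
        level = rng.choice(ALERT_LEVELS)  # simulated incoming alert
        if rng.random() < epsilon:
            action = rng.choice(ACTIONS)  # explore
        else:
            action = max(ACTIONS, key=lambda a: q[(level, a)])  # exploit
        reward = REWARD[(level, action)]
        n[(level, action)] += 1
        q[(level, action)] += (reward - q[(level, action)]) / n[(level, action)]  # running mean
    return q

q = train_responder()
print({s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in ALERT_LEVELS})
# {'low': 'allow', 'high': 'block'}
```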

Reinforcement Learning can also help secure and speed up your internet connection through practical apps. To better understand the settings involved, it helps to explore your network's admin panel, which is usually reached at 192.168.1.1, a default IP address assigned by many router manufacturers. Logging on to this address gives access to the router's dashboard, where you can adjust settings that affect your network's security and speed.

The availability of high-level libraries such as Keras democratizes deep learning adoption. By packaging mathematically complex concepts behind simple interfaces, these libraries let you develop models for optimal operations, tune them with highly customized parameters, and deploy them.

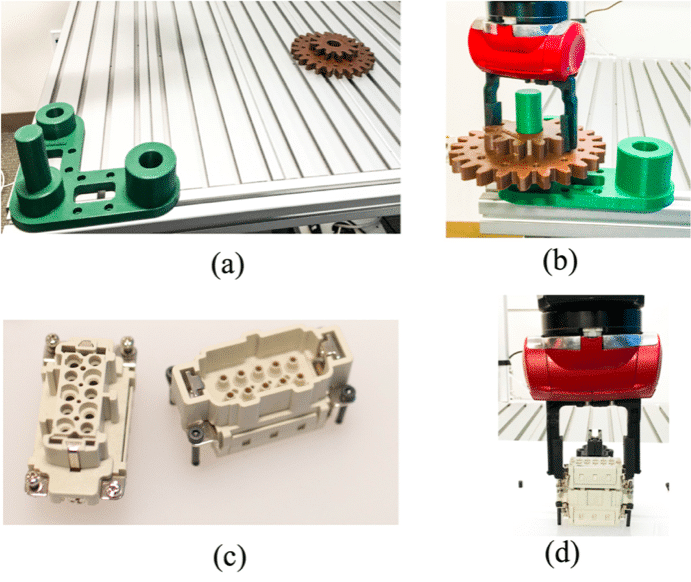

Reinforcement learning in robotics

Automation driven by reinforcement learning is also at work in production factories. Robots perform many repetitive duties, and some use deep reinforcement learning to learn how to perform their designated tasks with the greatest efficacy, speed, and precision. Take, for instance, the industrial robot at the Japanese company Fanuc. It is clever enough to train itself to perform a particular job, which makes it a point of pride for the company's manufacturing division.

As the robot performs a task with an object, it records the action on video. The robot then uses this "memory" to influence its future actions with that object. Whether or not the attempt captured on video succeeds, the robot "learns" from it. All of this is part of a deep learning model that controls and influences the robot's future actions.
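The idea of learning from every recorded attempt, successful or not, can be shown with a deliberately simplified sketch. This is not Fanuc's method, which the text describes as a deep learning model driven by video; here each attempt is reduced to a hypothetical `(grasp_angle, succeeded)` record, and the robot picks the angle with the best smoothed success rate.

```python
from collections import defaultdict

# Hypothetical log of past attempts: (grasp_angle_degrees, succeeded)
TRIALS = [(0, True), (0, False), (0, False),
          (45, True), (45, True), (45, False),
          (90, False)]

def best_grasp(trials):
    """Estimate each approach's success rate from recorded attempts and pick the best one."""
    attempts = defaultdict(int)
    successes = defaultdict(int)
    for angle, succeeded in trials:
        attempts[angle] += 1
        successes[angle] += int(succeeded)  # failures still count as attempts
    # Add-one (Laplace) smoothing keeps a single lucky or unlucky attempt from dominating.
    rates = {a: (successes[a] + 1) / (attempts[a] + 2) for a in attempts}
    return max(rates, key=rates.get), rates

angle, rates = best_grasp(TRIALS)
print(angle)  # 45
```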

Reinforcement learning for customer delivery

The goal of any manufacturer that sells products is to meet customer demand by delivering products quickly and safely while minimizing the cost of doing so. These savings help the business thrive by increasing profit margins. To distribute products on time, the manufacturer can treat delivery as a Split Delivery Vehicle Routing Problem, in which a single customer's order may be split across several vehicles.

In such systems, agents communicate and cooperate with each other by leveraging reinforcement learning techniques. In one such approach, Q-learning is used to build a system that serves multiple customers with just one vehicle. By reducing the number of trucks needed and optimizing execution time, the manufacturer cuts costs, improves delivery efficiency, and increases profit margins.
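To illustrate how Q-learning can plan a single vehicle's route through several customers, the sketch below uses a hypothetical four-node distance matrix (a depot plus three customers). It is a single-agent toy, not the multi-agent split-delivery system described above: the state is the current location plus the set of customers already served, the reward is the negative distance driven, and the learned route is checked against a brute-force search.

```python
import itertools
import random

# Hypothetical symmetric distance matrix: node 0 is the depot, nodes 1-3 are customers.
DIST = [
    [0, 4, 8, 5],
    [4, 0, 3, 7],
    [8, 3, 0, 4],
    [5, 7, 4, 0],
]
CUSTOMERS = frozenset({1, 2, 3})

def options(visited):
    """Next stops: any unvisited customer, or the depot once everyone has been served."""
    remaining = CUSTOMERS - visited
    return sorted(remaining) if remaining else [0]

def greedy(q, loc, visited):
    # Unseen actions default to 0, which is optimistic because every reward is negative.
    return max(options(visited), key=lambda a: q.get((loc, visited, a), 0.0))

def learn_route(episodes=5000, epsilon=0.3, seed=1):
    rng = random.Random(seed)
    q = {}  # (location, visited customers, next stop) -> estimated return (negative distance)
    for _ in range(episodes):
        loc, visited = 0, frozenset()
        while True:
            acts = options(visited)
            a = rng.choice(acts) if rng.random() < epsilon else greedy(q, loc, visited)
            reward = -DIST[loc][a]
            if a == 0:  # returned to the depot: episode ends
                q[(loc, visited, a)] = reward
                break
            nxt = visited | {a}
            future = max(q.get((a, nxt, b), 0.0) for b in options(nxt))
            # Learning rate 1 is acceptable here only because this toy environment is deterministic.
            q[(loc, visited, a)] = reward + future
            loc, visited = a, nxt
    # Read off the learned route by following the greedy policy.
    route, loc, visited = [0], 0, frozenset()
    while True:
        a = greedy(q, loc, visited)
        route.append(a)
        if a == 0:
            return route
        loc, visited = a, visited | {a}

def route_cost(route):
    return sum(DIST[a][b] for a, b in zip(route, route[1:]))

route = learn_route()
best = min(route_cost([0, *p, 0]) for p in itertools.permutations(CUSTOMERS))
print(route, route_cost(route), best)  # learned cost should match the brute-force optimum
```

Brute force is only feasible for tiny instances like this one; the point of a learned policy is to scale to cases where enumerating every route is impractical.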

Reinforcement learning for e-commerce

One of the advantages of RL is that it can learn from customer interactions and adapt to changing preferences and behavior patterns. This makes it an ideal solution for businesses that want to improve their customer experience and increase sales.

One example of reinforcement learning in e-commerce is product recommendation. Recommendation systems use RL algorithms to learn from customer behavior, such as search queries, clickstream data, and purchase history. By analyzing this data, the system can make personalized product recommendations, increasing the likelihood of a sale.
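A common way to frame the explore-versus-exploit side of recommendations is as a multi-armed bandit. The sketch below uses the standard UCB1 rule with hypothetical click probabilities standing in for real customers; production recommenders typically also condition on customer features, which this toy omits.

```python
import math
import random

# Hypothetical click-through probabilities; the learner never reads these directly.
CLICK_PROB = {"product_a": 0.05, "product_b": 0.10, "product_c": 0.30}

def ucb1_recommend(click_prob, rounds=5000, seed=42):
    """UCB1 bandit: each product is an arm; reward is 1 if the simulated customer clicks."""
    rng = random.Random(seed)
    products = list(click_prob)
    counts = {p: 0 for p in products}
    means = {p: 0.0 for p in products}
    for t in range(1, rounds + 1):
        untried = [p for p in products if counts[p] == 0]
        if untried:
            choice = untried[0]  # show every product once before comparing
        else:
            # Optimism under uncertainty: average reward plus an exploration bonus.
            choice = max(products, key=lambda p: means[p] + math.sqrt(2 * math.log(t) / counts[p]))
        reward = 1.0 if rng.random() < click_prob[choice] else 0.0
        counts[choice] += 1
        means[choice] += (reward - means[choice]) / counts[choice]
    return counts, means

counts, means = ucb1_recommend(CLICK_PROB)
print(max(counts, key=counts.get))  # the product shown most often
```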

Another example is dynamic pricing. RL algorithms can learn from historical sales data and adjust prices in real time based on demand and inventory levels. This can help businesses maximize revenue and profit margins while remaining competitive in the market.
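To show how the best price can depend on both demand and remaining inventory, here is a small sketch under a hypothetical demand model (three price points, fixed purchase probabilities, at most one sale per period). Because the model is assumed known, it is solved exactly by backward induction; an RL pricing agent aims for the same kind of optimal policy when the demand model has to be learned from sales data.

```python
from functools import lru_cache

PRICES = (10.0, 15.0, 20.0)
# Hypothetical demand model: chance that a customer buys during one period at each price.
BUY_PROB = {10.0: 0.9, 15.0: 0.7, 20.0: 0.3}

def q_value(periods_left, inventory, price):
    """Expected revenue of charging `price` now and pricing optimally afterwards."""
    p = BUY_PROB[price]
    sold = price + best_value(periods_left - 1, inventory - 1)
    unsold = best_value(periods_left - 1, inventory)
    return p * sold + (1 - p) * unsold

@lru_cache(maxsize=None)
def best_value(periods_left, inventory):
    """Optimal expected revenue; at most one unit sells per period in this toy model."""
    if periods_left == 0 or inventory == 0:
        return 0.0
    return max(q_value(periods_left, inventory, price) for price in PRICES)

def best_price(periods_left, inventory):
    return max(PRICES, key=lambda price: q_value(periods_left, inventory, price))

print(best_price(1, 1))  # 15.0: last chance to sell, so trade some price for probability
print(best_price(3, 1))  # 20.0: one unit, plenty of time to wait for a high-paying buyer
print(best_price(3, 3))  # 15.0: more stock, so selling more units at a lower price pays off
```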

RL can also be used for customer retention and loyalty programs. By analyzing customer behavior and preferences, businesses can create personalized incentives and rewards that encourage customers to return and make additional purchases. This can help to build long-term relationships with customers and increase customer lifetime value.

Reinforcement learning for trading

Reinforcement learning supports maximizing business benefits through end-to-end optimization, and it helps frame the parameters a business operates under to achieve the best possible result. When there is a "negative reward", for instance when sales shrink by 30%, the agent is forced to reevaluate its policy and potentially switch to a different one. Reinforcement learning can prepare for such critical situations through simulation: simulated scenarios that produce a negative reward push the agent to come up with workarounds and tailored approaches. Repeating these RL-based strategy adjustments over time lets the agent keep tuning its operation to cope with any downturn or problem that may arise.

At IBM, a sophisticated system built on the DSX platform makes decisions on financial trades using reinforcement learning. The reinforcement learning stock trading model takes stochastic actions at every trading step over historical stock price data, and the reward function is computed from the profit or loss of each trade.
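The reward setup described here, stochastic actions replayed over historical prices with profit or loss as the reward, can be sketched as follows. This is not IBM's implementation; the price series, the position encoding (-1 short, 0 flat, +1 long), and the comparison `momentum_policy` are hypothetical, and transaction costs are ignored.

```python
import random

def replay_rewards(prices, policy, seed=0):
    """Step through historical prices; the reward at each step is the profit or loss of the position held."""
    rng = random.Random(seed)
    rewards = []
    for t in range(len(prices) - 1):
        position = policy(prices[: t + 1], rng)  # -1 short, 0 flat, +1 long
        rewards.append(position * (prices[t + 1] - prices[t]))
    return rewards

def random_policy(history, rng):
    # Stochastic exploration: pick short, flat, or long uniformly at random.
    return rng.choice([-1, 0, 1])

def momentum_policy(history, rng):
    # Hypothetical rule for comparison: follow the most recent price move.
    if len(history) < 2:
        return 0
    return 1 if history[-1] > history[-2] else -1

prices = [100.0, 101.0, 103.0, 102.0]  # made-up closing prices
print(replay_rewards(prices, momentum_policy))  # [0.0, 2.0, -1.0]
print(sum(replay_rewards(prices, random_policy)))
```

An RL agent would use these per-step rewards to shift probability away from the random choices toward positions that earned a profit.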

Further development of RL, which learns behavior instead of following hand-coded rules, is essential for moving away from rule-based AI programming. The transition is complex, so problems along the way are all but certain. RL neural networks have very high training data requirements: gathering enough relevant data to build and evaluate new scenarios and conditions takes significant time and resources. For this reason, data collection needs to be automated.

One goal is to improve the accuracy of predictions with modern simulation methods, for example by creating "virtual miles" of synthetic data. Generative Adversarial Networks (GANs) are a key technology for bringing synthetic data generation into the mainstream. A GAN consists of two neural networks set up to compete against each other: a generator, which creates data, and a discriminator, which tests that data for authenticity. Over time, the generator learns to produce data so convincing that the discriminator can no longer distinguish real data from fake.
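The generator-versus-discriminator duel is usually written as a minimax objective. In the original GAN formulation, the discriminator $D$ outputs the probability that a sample is real, and the generator $G$ maps random noise $z$ to synthetic samples:

$$
\min_G \max_D \; \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

$D$ tries to maximize this value by scoring real data high and generated data low, while $G$ tries to minimize it by pushing $D(G(z))$ toward 1. In the idealized analysis, the optimum is reached when the generated data follow the same distribution as the real data; $D$ then outputs $1/2$ for every input and can no longer tell real from fake.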

Predictive models depend heavily on feature engineering, the reshaping of data using domain knowledge, yet the skills it requires are in short supply. Some AutoML platforms are already smart enough to filter out noise and discard weaker features automatically. Their use of ensembles of diverse models remains pivotal: to predict real-world problems, a set of predictor models must be able to capture a little bit of everything.

Reinforcement learning for banking

Starting in the front office, virtual agents have become indispensable in customer service. Digital assistants, chatbots, and voice systems take on the role of customer service or sales representatives and automate the dialog with customers. Some banks' Facebook chatbots, for example, let customers transfer money or browse product details. Chatbots can also guide customers through online loan applications or help them find branches and exchange rates. Beyond chatbots, the technology is also used in robo-advisory applications, e.g. portfolio management, securities advice, or stock and bond trading.

In addition, automating business processes can reduce costs and support employees in routine tasks, allowing them to focus on strategic and analytical topics. In regulatory reporting, for example, unsupervised learning can help identify and minimize data quality issues in data sets by carrying out root-cause analyses. Deep learning also optimizes data management by building a more holistic picture of customer and transaction activities.

In-depth data analysis enables banks to generate richer insights from their business processes, improving and accelerating decision-making. Reinforcement learning is used, for example, in risk management for credit checks. Machine learning models can also identify and reduce risks in the fight against fraud: with the help of supervised and unsupervised learning, suspicious patterns in customer behavior are examined, and the likelihood of corporate bankruptcies is predicted. The possibilities go further still: with social media analytics, social media data can even serve as a source for crisis forecasts.